TL;DR

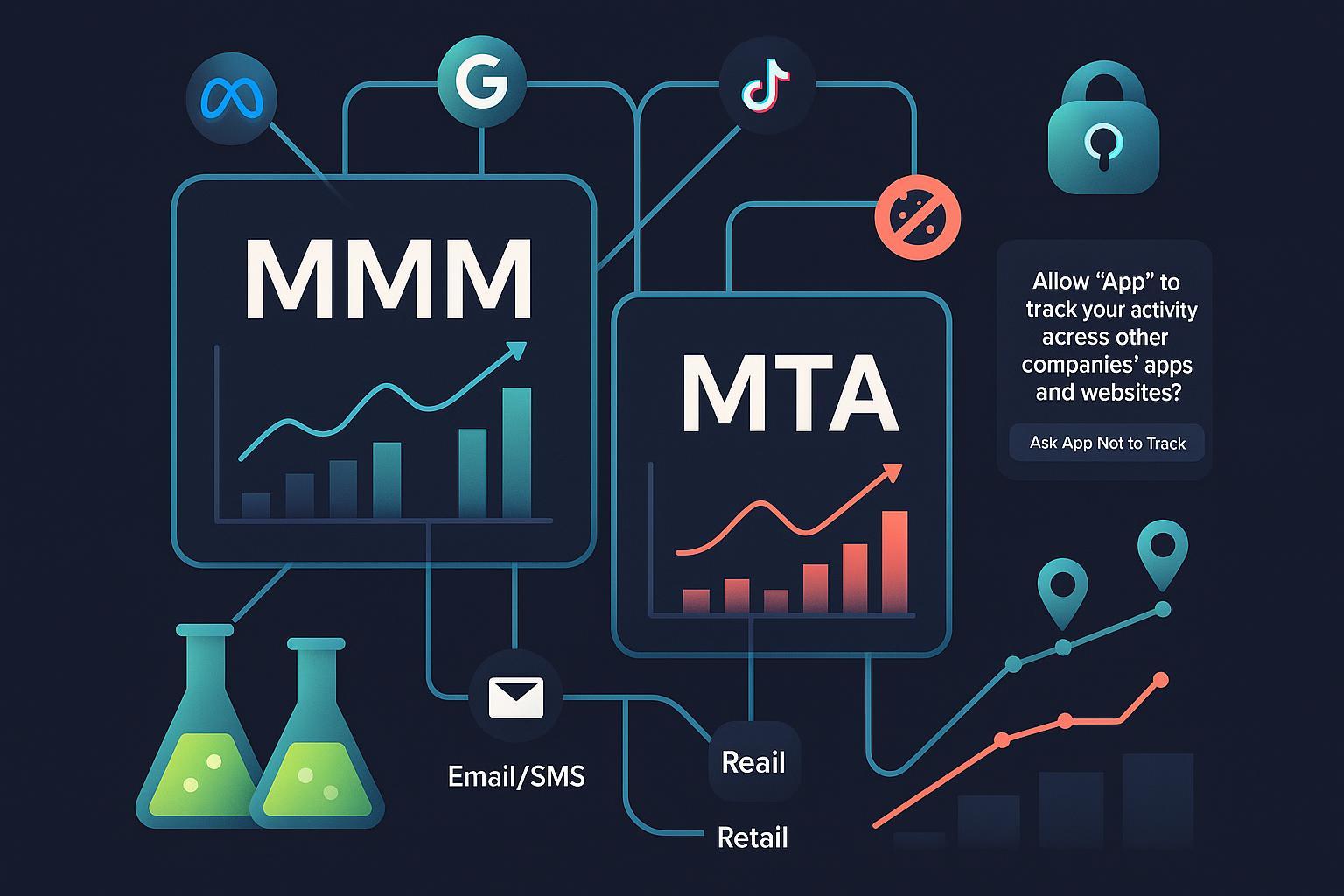

- In 2025, neither MMM nor MTA is a silver bullet. Use MMM for strategic allocation and long‑term planning; use MTA tactically for within‑channel optimization where you still have signal.

- The most reliable path for DTC brands is hybrid: MMM + experiments (geo/lift) + selective MTA + clean rooms. Validate early and often.

- If you spend < $5M/year and rely on Meta + Google, lean on platform experiments plus a lightweight MMM (e.g., Robyn) for budget splits; use GA4’s DDA for quick creative/campaign tweaks.

- If you spend $5–30M/year across Meta, Google, TikTok, affiliates, and email/SMS, adopt a Bayesian MMM (e.g., Meridian or PyMC‑Marketing) calibrated with lift tests; keep MTA for web/Android and use clean rooms for walled gardens.

- If you’re > $30M/year and adding retail/TV, invest in enterprise MMM with geo‑hierarchical modeling and continuous incrementality testing; constrain MTA to specific touchpoints with sufficient signal.

Why this debate looks different in 2025

- Apple’s ATT (iOS 14.5) and SKAdNetwork reduced user‑level visibility on iOS, and Safari and Firefox block third‑party cookies by default, which shifts MTA toward modeled/probabilistic signals and aggregated reporting. Apple documents SKAdNetwork 4 features such as multiple postbacks, coarse vs. fine conversion values, and privacy thresholds that limit granularity on iOS. These require careful conversion‑schema design for optimization and reporting, per the official Apple AdAttributionKit documentation and related pages (2023–2025).

- GA4’s data‑driven attribution (DDA) is now the default multi‑touch model in Google Analytics, but under consent and ITP/ATT constraints it relies on modeled conversions. Google’s help center explains how credit is assigned and how lookback windows work (30–90 days), and notes that sufficient volume is needed or GA4 falls back to simpler models, as described in the GA4 attribution model overview (2024) and Attribution settings API reference (2024).

- Meanwhile, modern MMM has matured. Google launched Meridian in 2025 as an open‑source Bayesian MMM that supports experiment calibration, hierarchical modeling, and budget optimization, detailed in the Google Meridian launch blog (2025) and Meridian documentation (2025). Open‑source options like Meta’s Robyn repository (active through 2024–2025) and PyMC‑Marketing offer credible, inspectable approaches for in‑house teams.

How MMM works today (and what it gives DTC marketers)

- Philosophy: Top‑down, aggregated modeling to estimate incremental impact by channel/tactic. MMM handles diminishing returns, carryover (adstock), seasonality, promotions, and prices to infer how spend changes outcomes.

- Inputs you’ll need: Daily/weekly spend or exposures (impressions/clicks), outcomes (revenue, orders, new customers), promo flags, seasonality/holiday variables, price/discount indices, and ideally reach/frequency. Meridian explicitly supports Bayesian priors, adstock, saturation, experiment calibration, and geo‑hierarchical models, per the Meridian docs on Bayesian inference (2025) and its data platform guidance (2025). Robyn similarly supports adstock, saturation, lift calibration, and allocator tools as documented in its README and releases (2024–2025).

- Outputs you can act on: Channel/tactic‑level ROI with credible intervals, elasticities, and scenario planners to simulate “what if we move $X from TikTok to YouTube?” Meridian’s open‑source stack includes budget optimization and uncertainty metrics, per its About the project page (2025).

- Strengths in 2025: Resilient to privacy loss (aggregated data), captures incrementality when well specified and calibrated with experiments, and informs cross‑channel budget allocation. Suitable even when user‑level data is fragmented.

- Limitations to respect: Risk of misspecification (omitted variables like promotions), multicollinearity among correlated channels, and mis‑modeled carryover/saturation. Needs enough temporal variation and 18–24 months of data for stability. Calibration with experiments (geo/lift) is critical.
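
To make the adstock, saturation, and "what if" ideas above concrete, here is a minimal sketch in plain NumPy. It is not how Meridian or Robyn implement these transforms; the function names, the geometric carryover form, the Hill curve, and every parameter value are assumptions chosen for illustration.

```python
import numpy as np

def geometric_adstock(spend, decay):
    """Carry a fraction `decay` of last period's adstocked spend into this period."""
    spend = np.asarray(spend, dtype=float)
    out = np.zeros_like(spend)
    carry = 0.0
    for t, x in enumerate(spend):
        carry = x + decay * carry
        out[t] = carry
    return out

def hill_saturation(x, half_saturation, shape=1.0):
    """Diminishing returns: output approaches 1 and equals 0.5 at `half_saturation`."""
    x = np.asarray(x, dtype=float)
    return x**shape / (x**shape + half_saturation**shape)

def channel_response(spend, decay, half_saturation, scale):
    """Modeled per-period contribution of one channel (adstock, then saturation)."""
    return scale * hill_saturation(geometric_adstock(spend, decay), half_saturation)

# Carryover example: one burst of spend keeps working in later weeks.
adstocked = geometric_adstock([100.0, 0.0, 0.0], decay=0.5)  # 100, 50, 25

# Hypothetical fitted parameters; a real MMM would estimate these from data.
params = {
    "tiktok": dict(decay=0.3, half_saturation=40_000, scale=120_000),
    "youtube": dict(decay=0.6, half_saturation=80_000, scale=200_000),
}

def total_response(weekly_plan, weeks=12):
    """Sum modeled contribution across channels for a constant weekly plan."""
    return sum(
        channel_response([weekly_plan[ch]] * weeks, **params[ch]).sum()
        for ch in weekly_plan
    )

baseline = {"tiktok": 50_000, "youtube": 30_000}
shifted = {"tiktok": 40_000, "youtube": 40_000}  # move $10k/week to YouTube
delta = total_response(shifted) - total_response(baseline)
print(f"Modeled change in outcome from the shift: {delta:,.0f}")
```

The sign and size of `delta` depend entirely on the assumed curves, which is why the fitted parameters need credible intervals and experiment calibration before anyone moves budget on them.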

How MTA works now (and where it still shines)

- Philosophy: Bottom‑up credit across observed touchpoints. In 2025 this includes a mix of first‑party clickstream, platform APIs, server‑side tagging, SKAN postbacks on iOS, and clean‑room joins for walled gardens.

- Building blocks:

  - GA4’s data‑driven attribution (DDA) with configurable lookback windows (30–90 days) and modeled conversions under consent gaps, per the GA4 DDA overview (2024) and Attribution settings reference (2024).

  - Clean rooms that allow privacy‑safe analysis of event‑level ad data joined to your first‑party data. Google’s Ads Data Hub introduction and Amazon’s Marketing Cloud guide describe aggregation thresholds and pathing capabilities (2024–2025).

  - Meta’s Aggregated Event Measurement limits iOS event granularity and prioritizes up to 8 web/app events per domain or app, described in the Meta AEM developer guides (2024). On iOS, SKAdNetwork 4 provides delayed, aggregated postbacks with privacy thresholds and coarse vs. fine conversion values, per Apple’s AdAttributionKit/SKAN docs (2023–2025).

- Where MTA is useful in DTC:

  - Creative and keyword optimization on web/Android where you still observe journeys.

  - Within‑platform decisions (e.g., shifting budget between Meta ad sets) using platform‑native signals plus your own conversion quality checks.

  - Retention and lifecycle messaging (email/SMS) where deterministic IDs exist.

- Limitations to respect: Systematic blind spots on iOS/Safari; deduping challenges across walled gardens; last‑touch bias when signals are sparse; and probabilistic modeling that can be brittle without rigorous QA.
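
The "bottom‑up credit across observed touchpoints" idea can be sketched with the simplest possible rule, an equal (linear) split. This is deliberately not GA4's DDA algorithm, which is model‑based; the path data and channel names below are made up for illustration.

```python
from collections import defaultdict

def linear_attribution(paths):
    """Split each conversion's value equally across the touchpoints seen on its path."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        if not touchpoints:
            # No observable touchpoints, e.g. a journey hidden by consent or iOS limits.
            credit["(unattributed)"] += value
            continue
        share = value / len(touchpoints)
        for channel in touchpoints:
            credit[channel] += share
    return dict(credit)

# Hypothetical converting journeys: (ordered touchpoints, order value).
paths = [
    (["meta", "google_search", "email"], 90.0),
    (["google_search"], 60.0),
    ([], 30.0),
]

credit = linear_attribution(paths)
for channel, value in sorted(credit.items()):
    print(f"{channel}: {value:.2f}")
```

Note how the hidden journey lands in an unattributed bucket: any rule that only sees observed paths will under‑credit channels whose touchpoints are invisible, which is the structural bias described above.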

Head‑to‑head: What to trust for which job

- Accuracy and bias profile

  - MMM: Estimates causal, incremental effects when the model is well specified and calibrated with experiments. Robust to ATT/cookie loss because it uses aggregated data. Still vulnerable to misspecification and correlated inputs.

  - MTA: Precise on observable paths, but structurally biased wherever visibility is missing (iOS, walled gardens). Treat DDA as probabilistic; validate with experiments.

- Granularity and actionability

  - MMM: Channel/tactic/geo granularity. Great for quarterly budget allocation and scenario planning; less suited for day‑to‑day creative rotation.

  - MTA: Campaign/ad/keyword granularity. Great for near‑real‑time tweaks where you have signal; poor for total channel value when journeys are partially hidden.

- Speed, cost, and staffing

  - MMM: Expect 6–10 weeks to first model with a vendor or advanced in‑house stack; refresh monthly or quarterly. Open source has lowered barriers (see Google Meridian docs (2025) and Meta Robyn (2024–2025)), but you still need DS/analytics.

  - MTA: Faster to stand up for web/Android, but cross‑platform and clean room work increases cost/complexity. Requires disciplined data engineering, naming, and consent management.

- Data requirements

  - MMM: 18–24+ months of weekly data, including spend/exposure, outcomes, and controls for promotions and seasonality; reach/frequency if available. Geo data accelerates learnings via hierarchical models, per Meridian’s capabilities (2025).

  - MTA: Consistent UTMs, consented IDs, server‑side tagging, CRM/CDP joins, platform postbacks (SKAN), and clean‑room access to analyze walled garden signals.
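
Before fitting an MMM, it can help to check the data requirements above mechanically. The sketch below flags short histories, channels with no spend variation, and highly correlated channel pairs. The 78‑week floor (roughly 18 months) follows the guidance in this article; the 0.9 correlation cutoff, the function name, and the synthetic spend series are assumptions.

```python
from itertools import combinations

import numpy as np

def mmm_readiness(spend_by_channel, min_weeks=78, max_abs_corr=0.9):
    """Return warnings for weekly spend series keyed by channel name."""
    names = list(spend_by_channel)
    data = {n: np.asarray(spend_by_channel[n], dtype=float) for n in names}
    issues = []

    n_weeks = min(len(series) for series in data.values())
    if n_weeks < min_weeks:
        issues.append(f"only {n_weeks} weeks of history (want >= {min_weeks})")

    # A channel whose spend never changes gives the model nothing to learn from.
    flat = {n for n in names if data[n].std() == 0}
    issues += [f"{n}: no spend variation" for n in names if n in flat]

    # Channels that move together are hard to separate (multicollinearity).
    for a, b in combinations([n for n in names if n not in flat], 2):
        corr = np.corrcoef(data[a][:n_weeks], data[b][:n_weeks])[0, 1]
        if abs(corr) > max_abs_corr:
            issues.append(f"{a}/{b}: correlation {corr:.2f} exceeds {max_abs_corr}")
    return issues

# Synthetic two-year example (104 weeks); all numbers are made up.
rng = np.random.default_rng(0)
meta = rng.uniform(10_000, 50_000, size=104)
spend = {
    "meta": meta,
    "tiktok": 0.5 * meta,            # scaled in lockstep with Meta
    "email": np.full(104, 2_000.0),  # flat spend every week
    "google": rng.uniform(5_000, 30_000, size=104),
}

issues = mmm_readiness(spend)
for issue in issues:
    print(issue)
```

A warning here is a prompt to create variation deliberately (for example, through a geo test or a planned spend pulse), not a reason to drop the channel from the model.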

Data, costs, and timelines (ballpark)

- MMM

  - Vendor‑led: ~$10k–$100k+ with 6–10 weeks to first model; ongoing refresh monthly/quarterly.

  - In‑house: Software costs can be low (open source), but total cost often comparable once staffing and productionization are included; timelines commonly 3–6 months to reach stable operations.

- MTA and clean rooms

  - Initial implementation across channels and clean rooms can run ~$50k–$200k+ and take 2–6+ months, depending on scope and privacy reviews; ongoing query/platform costs apply.

Note: These are indicative ranges based on industry practice; validate with your vendors and internal teams.

Decision guidance by stage, data maturity, and channel mix

- Early‑stage DTC (< $5M annual ad spend), heavy Meta + Google, limited DS

  - What to trust: Platform experiments (Meta Conversion Lift when eligible; Google geo experiments) to get iROAS and incrementality; a lightweight MMM (Robyn) for allocation.

  - How to run it: Keep GA4 clean and use server‑side tagging; standardize UTMs; run quarterly lift tests on your biggest channel; refresh MMM quarterly.

  - Where MTA helps: GA4 DDA for creative/campaign‑level decisions; avoid over‑reading totals on iOS.

- Scaling DTC ($5–30M), multi‑channel (Meta, Google, TikTok, affiliates), some offline

  - What to trust: Bayesian MMM (Meridian or PyMC‑Marketing) calibrated with lift/geo tests; clean rooms (ADH, AMC) for walled gardens; triangulate with MTA on web/Android.

  - How to run it: Maintain a measurement calendar; run at least one geo experiment per quarter; enforce naming/UTM governance; document MMM priors and elasticities.

  - Where MTA helps: Creative rotation, keyword budgets, funnel diagnostics where IDs exist.

- Mature DTC (>$30M), omnichannel + retail/CTV

  - What to trust: Enterprise MMM with geo‑hierarchical models and reach/frequency inputs; continuous incrementality testing.

  - How to run it: Integrate promo calendars, price indices, and retail signals; use clean rooms for cross‑publisher reach/overlap; limit MTA to deterministic surfaces.

  - Where MTA helps: Specific touchpoints (e.g., brand search cannibalization checks, CRM journeys), not the full picture.

Hybrid playbooks you can copy

- Playbook 1: MMM + quarterly lift tests + GA4 DDA for tactics

  - Build a Robyn/Meridian model with 24 months of weekly data. Calibrate Meta and YouTube with lift/geo results (e.g., iROAS priors). Compare MMM elasticities to lift outcomes; adjust priors/controls if gaps persist.

  - Use GA4 DDA to reallocate creative/campaign budget within each channel weekly; do not use DDA to set total channel budgets.

  - Repeat monthly: refresh MMM input data; update the scenario planner; lock 4–6 week budget changes aligned to MMM; keep day‑to‑day tweaks inside channels via MTA.

- Playbook 2: Clean rooms for pathing + MMM for allocation

  - In ADH, analyze assisted paths and reach/frequency; export only aggregated insights per Google’s privacy thresholds outlined in the Ads Data Hub introduction (2024–2025).

  - Feed path insights (e.g., the role of YouTube view‑through) into MMM as additional controls or priors; test uplift with geo experiments recommended by the Think with Google Modern Measurement Playbook (2023).

- Playbook 3: iOS‑heavy brand mitigation

  - Design SKAN 4 conversion schemas that map to value tiers (e.g., registration, first purchase) recognizing coarse/fine values and privacy thresholds per Apple’s conversion value docs (2023–2025).

  - Expect delayed, aggregated postbacks and plan optimization windows accordingly; use MMM and lift tests to estimate true iOS incrementality.

  - Keep MTA focused on Android/web and lifecycle channels; reconcile iOS via experiments + MMM.
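
For Playbook 3, a conversion schema can be prototyped as plain data before it goes anywhere near an SDK. The sketch below assumes SKAN 4's fine values (integers 0–63) and coarse values (low, medium, high); the tier names, value assignments, and revenue floors are hypothetical, and no Apple API is called.

```python
from dataclasses import dataclass

COARSE_LEVELS = ("low", "medium", "high")  # SKAN 4 coarse conversion values

@dataclass(frozen=True)
class Tier:
    name: str
    fine_value: int          # SKAN fine values are 6-bit integers, 0-63
    coarse_value: str
    min_revenue: float       # hypothetical revenue floor for this tier
    needs_registration: bool = False

# Hypothetical schema, ordered from least to most valuable.
SCHEMA = [
    Tier("install", 0, "low", 0.0),
    Tier("registration", 10, "low", 0.0, needs_registration=True),
    Tier("first_purchase", 30, "medium", 0.01),
    Tier("high_value_purchase", 50, "high", 100.0),
]

def validate(schema):
    """Reject schemas that SKAN could not represent or that are ambiguous."""
    fine = [t.fine_value for t in schema]
    if any(not 0 <= v <= 63 for v in fine):
        raise ValueError("fine values must be between 0 and 63")
    if len(set(fine)) != len(fine):
        raise ValueError("fine values must be unique")
    if any(t.coarse_value not in COARSE_LEVELS for t in schema):
        raise ValueError("coarse values must be low, medium, or high")

def tier_for(registered, revenue, schema=SCHEMA):
    """Pick the most valuable tier whose conditions the user currently meets."""
    eligible = [
        t for t in schema
        if revenue >= t.min_revenue and (registered or not t.needs_registration)
    ]
    return max(eligible, key=lambda t: t.fine_value)

validate(SCHEMA)
tier = tier_for(registered=True, revenue=25.0)
print(tier.name, tier.fine_value, tier.coarse_value)
```

Keeping fine and coarse values side by side in one schema makes it easier to reason about what you will still learn when privacy thresholds mean only the coarse value comes back.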

Validation and calibration: how to keep yourself honest

- Run randomized geo experiments at least quarterly on major channels. Google’s experiment playbooks describe how to randomize markets, avoid contamination, and size duration for power, as detailed in the Think with Google Experiments Playbook (2023) and incrementality testing guidance (2022–2023).

- Where available, use Meta Conversion Lift for large enough campaigns; calibrate your Meta MMM priors to the observed lift. (Meta’s official pages vary; check the Business Help Center for the most current eligibility and setup.)

- Triangulate: Compare MMM elasticities to lift results; compare MTA assisted paths in ADH/AMC to MMM share of contribution; investigate persistent gaps with additional tests.
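
The triangulation step can be sketched as a simple check: estimate iROAS from a geo test, then see whether it falls inside the MMM's credible interval for the same channel. The control‑scaled counterfactual below is one basic estimator among several, and all revenue figures, spend, and interval bounds are invented for illustration.

```python
def geo_lift_iroas(treat_pre, treat_post, control_pre, control_post, extra_spend):
    """Incremental revenue per incremental dollar, using control geos as the counterfactual.

    Expected treatment revenue is the pre-period level scaled by the growth
    observed in control geos over the same window.
    """
    expected_treat_post = treat_pre * control_post / control_pre
    incremental_revenue = treat_post - expected_treat_post
    return incremental_revenue / extra_spend

def compare_to_mmm(iroas, mmm_low, mmm_high):
    """Classify the lift result relative to the MMM credible interval."""
    if iroas < mmm_low:
        return "lift below MMM interval: MMM may be over-crediting this channel"
    if iroas > mmm_high:
        return "lift above MMM interval: MMM may be under-crediting this channel"
    return "lift within MMM interval"

# Hypothetical test readout: revenue in treatment/control geos, before and during the test.
iroas = geo_lift_iroas(
    treat_pre=200_000, treat_post=260_000,
    control_pre=100_000, control_post=110_000,
    extra_spend=20_000,
)

# Hypothetical 90% credible interval for the channel's ROI from the MMM.
verdict = compare_to_mmm(iroas, mmm_low=1.4, mmm_high=2.6)
print(f"iROAS = {iroas:.2f}; {verdict}")
```

A lift estimate has its own uncertainty, so in practice you would compare two intervals rather than a point against an interval; a persistent gap is the cue to revisit priors and controls or to run another test.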

Governance and QA checklist for 2025

- Standardize UTMs and campaign naming; avoid “(not set)” and high‑cardinality chaos in GA4 by following the GA4 tagging best practices (2024) and related guidance on custom dimensions/cardinality (2024).

- Centralize first‑party data in a warehouse; use server‑side tagging and GA4 Measurement Protocol where allowed, following the Measurement Protocol documentation (2024–2025). Ensure consent signals are honored.

- Maintain a measurement calendar: planned experiments, MMM refreshes, promotions, and retail events; document assumptions, priors, elasticities, and confidence intervals.

- Institute quarterly calibration: at least one lift/geo test per major channel; review MMM diagnostics (MCMC convergence, residuals, out‑of‑sample error); sanity‑check MTA trends against consent/ID coverage.

- Keep clean room guardrails: respect aggregation thresholds in ADH and AMC as described in the ADH docs and AMC guide (2024–2025). Export aggregated results only; document query templates and QA.
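
UTM and naming governance from the checklist above is easy to automate. The sketch below lints landing‑page URLs using only the Python standard library; the required keys, the lowercase snake_case rule, and the allowed `utm_medium` values are a hypothetical house taxonomy, not a GA4 requirement.

```python
import re
from urllib.parse import parse_qs, urlparse

REQUIRED_KEYS = ("utm_source", "utm_medium", "utm_campaign")
# Hypothetical taxonomy; replace with your own agreed list.
ALLOWED_MEDIUMS = {"cpc", "paid_social", "email", "sms", "affiliate"}
NAME_PATTERN = re.compile(r"[a-z0-9_]+")

def utm_issues(url):
    """Return a list of governance problems found in one tagged URL."""
    params = parse_qs(urlparse(url).query)  # blank values are dropped by default
    issues = []
    for key in REQUIRED_KEYS:
        values = params.get(key)
        if not values:
            issues.append(f"missing {key}")
        elif not NAME_PATTERN.fullmatch(values[0]):
            issues.append(f"{key} is not lowercase snake_case: {values[0]!r}")
    medium = params.get("utm_medium", [""])[0]
    if medium and medium not in ALLOWED_MEDIUMS:
        issues.append(f"utm_medium {medium!r} is not in the allowed list")
    return issues

good = "https://example.com/?utm_source=meta&utm_medium=paid_social&utm_campaign=spring_sale"
bad = "https://example.com/?utm_source=Meta&utm_medium=social"

print(utm_issues(good))
for issue in utm_issues(bad):
    print(issue)
```

Running a check like this in the campaign launch workflow catches inconsistent casing and missing parameters before they fragment reporting downstream.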

Common pitfalls to avoid

- Treating MTA as ground truth when 40–60% of journeys are partially hidden on iOS/Safari—your totals will be biased even if within‑channel moves look good.

- Running MMM without promotions/pricing controls or with too little temporal variation—this inflates certain channels and erodes trust.

- Double counting: Adding MTA totals to MMM contributions or reading platform ROAS as incremental.

- Ignoring uncertainty: MMM without credible intervals and MTA without confidence bands lead to false precision and over‑optimization.

- Skipping validation: No lift/geo tests means no anchor for reality, especially for brand search and view‑through channels.

FAQs

- Is MMM replacing MTA? No. MMM answers “how much incremental value does each channel/tactic create?”; MTA answers “which creatives/keywords/campaigns look best among the touchpoints we can observe?” Use them together.

- Can GA4’s DDA be used for budget allocation? Use it cautiously. It’s helpful for within‑channel moves but, per Google’s own documentation (2024), DDA relies on modeled conversions under consent gaps; calibrate with experiments and MMM before making big cross‑channel shifts.

- Do we need 24 months of data for MMM? More is better. Many teams target 18–24 months of weekly data to stabilize seasonality and adstock, a practice reflected in open‑source MMM docs like Meridian (2025) and community guidance in Robyn (2024–2025).

- What if we’re influencer/affiliate‑heavy? Expect MTA gaps due to off‑platform and dark‑social effects. Use unique codes/links for experiments, bring spend into MMM with careful controls, and run geo tests around creator pushes to estimate incrementality.

- How do clean rooms fit in? Use ADH/AMC for privacy‑safe pathing and reach/overlap, then feed insights into MMM and experimentation, per Google’s ADH intro (2024–2025) and Amazon’s AMC guide (2024–2025).

Key takeaways

- Trust MMM for strategic allocation and long‑term planning.

- Trust MTA for near‑term, within‑channel optimization where you have signal.

- In 2025, a hybrid approach—MMM + experiments + selective MTA + clean rooms—gives DTC brands the most reliable, privacy‑resilient measurement stack.

References and further reading

- Google (2025): Meridian launch announcement and Meridian documentation

- Meta (2024–2025): Robyn open‑source MMM repository

- PyMC Labs (2024–2025): PyMC‑Marketing repository

- Google Analytics (2024): GA4 DDA overview and Attribution settings

- Apple (2023–2025): AdAttributionKit and SKAdNetwork docs

- Google (2023): Think with Google Modern Measurement Playbook and Experiments Playbook

- Google (2024–2025): Ads Data Hub introduction

- Amazon Ads (2024–2025): Amazon Marketing Cloud guide