If you run post‑purchase offers in 2025 (thank‑you page modals, order status page tiles, confirmation email/SMS), this guide shows exactly how to measure the true incremental uplift—attach rate, revenue, and margin—without heavy math. Expect to plan in a few hours, instrument in a day, and run for 2–3 weeks for a confident read.

Who this is for

- D2C brands and retailers using Shopify or BigCommerce, plus GA4/Amplitude/Mixpanel and a BI tool (Looker/Power BI/Sheets).

- Growth, product, and data practitioners comfortable with basic analytics and experiments.

What you’ll get

- A step‑by‑step framework to quantify attach rate uplift post‑purchase.

- Practical experiment designs (A/B holdout, ghost/placebo, geo/switchback) you can implement this month.

- Clear formulas, a 2025 worked example, QA checklists, and guardrails.

—

Step 0: Definitions and prerequisites

Key terms (keep these consistent in your BI model):

- Eligible order: An order that meets your predefined rules (country, category, payment method, price band, inventory on hand).

- Exposure: The offer was actually rendered to the user (set an exposure_flag when your UI/modal loads).

- Acceptance: The user accepted the offer (set accept_flag on click/confirm).

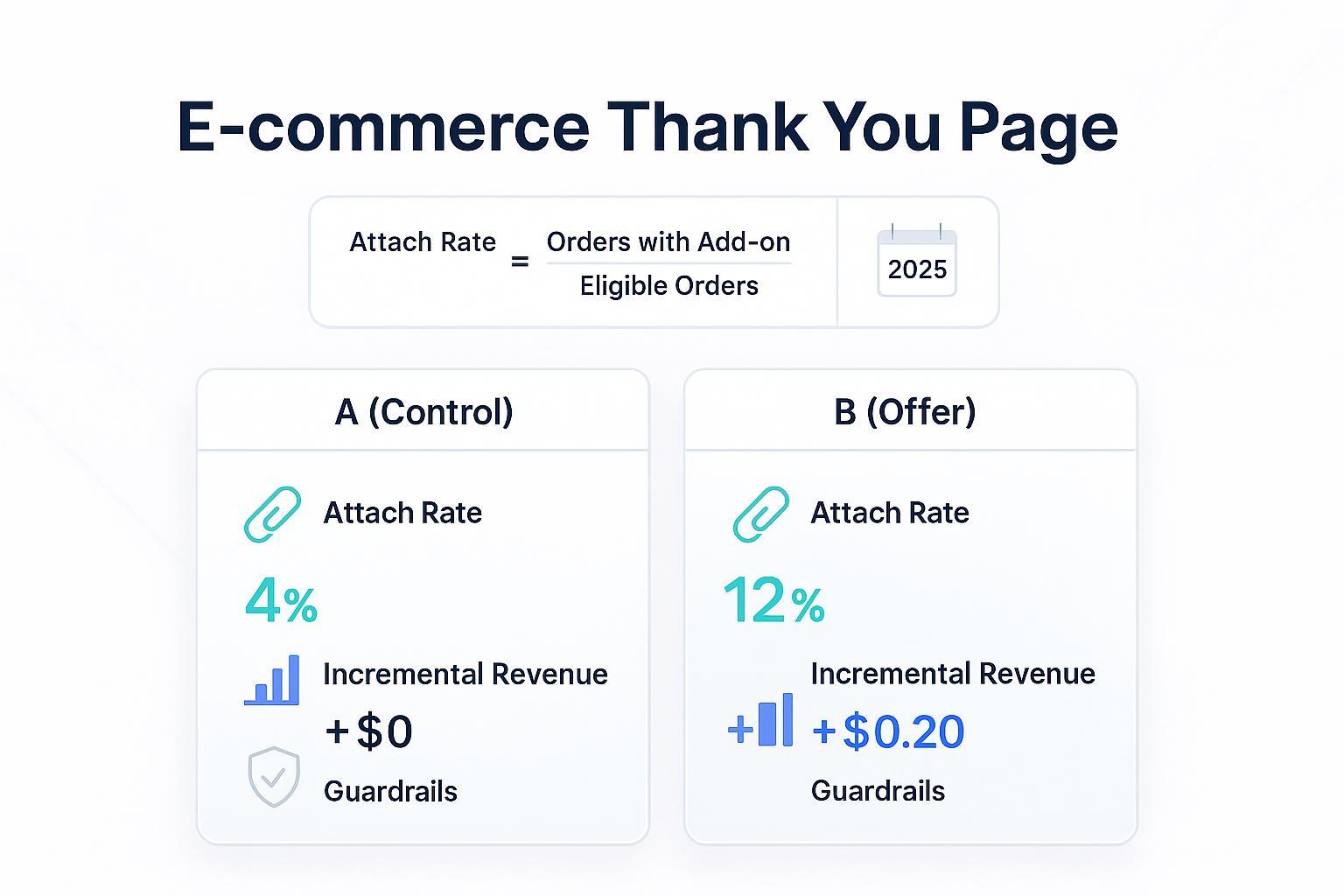

- Attach rate (order‑level): orders_with_addon / eligible_orders.

- Incremental attach rate (Δattach): attach_rate_treatment − attach_rate_control.

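- Incremental revenue per eligible order (IREO): addon_revenue_T / eligible_orders_T − addon_revenue_C / eligible_orders_C. Divide each group by its own eligible orders so an unequal split does not bias the result.

- Incremental margin per eligible order (IMEO): addon_margin_T / eligible_orders_T − addon_margin_C / eligible_orders_C.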

- Treatment‑on‑the‑treated revenue per adopter (ToTT): IREO / attach_rate_T.

Tools you’ll need

- Shopify Checkout UI Extensions or BigCommerce order confirmation template access to log exposure/accept events. On Shopify, instrument render and click events through the Thank you page targets described in the 2024–2025 docs: Shopify Checkout UI Extensions and Customize footer/Thank you targets. On BigCommerce, log on the order confirmation template and stitch events to orders via APIs and webhooks, using the BigCommerce GraphQL Storefront reference.

- GA4 eCommerce configuration to tag add‑ons distinctly in the purchase event (e.g., item_type = "add_on") per Google’s current guidance on the purchase event and items arrays: GA4 eCommerce events.

- A BI workspace (Looker/Power BI/Sheets) to compute metrics and visualize guardrails.

Attribution windows

- On‑site post‑purchase modal or order status page: attribute within the same session or up to 24 hours.

- Email/SMS post‑purchase: use a fixed window, typically 5 days for clicks per Klaviyo’s 2024–2025 guidance in their Klaviyo attribution updates. Keep windows conservative, and net out returns that occur inside the window.
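The window rules above can be expressed as a small check. This is a minimal sketch: the surface names (`onsite`, `email_sms`) and the function name are illustrative assumptions, and the durations are simply the 24-hour and 5-day values quoted in the text.

```python
from datetime import datetime, timedelta

# Window lengths taken from the guidance above; surface keys are hypothetical.
ATTRIBUTION_WINDOWS = {
    "onsite": timedelta(hours=24),
    "email_sms": timedelta(days=5),
}


def is_attributable(surface: str, exposed_at: datetime, accepted_at: datetime) -> bool:
    """Return True if the acceptance happened after exposure and inside the window."""
    elapsed = accepted_at - exposed_at
    return timedelta(0) <= elapsed <= ATTRIBUTION_WINDOWS[surface]


# Example: an on-site offer accepted 23 hours after it rendered.
print(is_attributable("onsite", datetime(2025, 8, 5, 12), datetime(2025, 8, 6, 11)))
```

Keeping the windows in one mapping makes it harder for the BI model and the event pipeline to drift apart on what counts as attributed.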

—

Step 1: Set your goal and pass/fail thresholds

Decide in advance what “good” looks like so you don’t over‑interpret noise.

Example 2025 thresholds

- Δattach ≥ 0.8 percentage points.

- IMEO ≥ $0.40 per eligible order (margin, not revenue).

- Guardrails neutral or positive (no meaningful degradation).

Guardrails to monitor

- Checkout latency, payment failures, refunds/returns, chargebacks, CS contacts, and non‑add‑on AOV to catch cannibalization. For structure on guardrails in experiments, see Statsig’s 2024–2025 discussion of guardrail metrics in A/B tests in Statsig on guardrails.

—

Step 2: Lock eligibility and choose offer surfaces

Define eligibility once and freeze it for the test duration to avoid drift.

- Typical rules: in‑stock add‑on only; exclude preorder/backorder; US/CA only; exclude COD; exclude orders with heavy promo codes.

- Offer surfaces: Thank‑you page modal, order status page component, confirmation email/SMS within 24–72 hours.

Instrumentation note

- For Shopify, send an exposure event to your own endpoint when your Checkout UI Extension renders on the Thank you page (targets and capabilities are documented in 2024–2025 Shopify docs such as Customize footer/Thank you targets and the Instructions API).

- For BigCommerce, add logging JavaScript to the order confirmation template and reconcile via webhooks and the Orders APIs/GraphQL.

—

Step 3: Choose an experiment design you can actually run

Pick the most robust design your stack supports right now:

- Randomized A/B with 10–30% holdout (preferred): Randomly assign at the order or customer level; show offers to treatment only. Optimizely’s experimentation playbooks remain a good operational reference for A/B basics in 2025: Optimizely A/B testing ideas.

- Ghost/placebo control (optional): Show a non‑actionable “ghost” UI frame to a subset to isolate UX friction from the economic value of the offer. This follows placebo‑control principles used in experimentation practice.

- Geo or switchback (when randomization is constrained): Assign by region or alternate exposure by time blocks (e.g., daily on/off) and analyze with difference‑in‑differences per modern experimentation guidance like Statsig’s marketplace series in 2024–2025: Statsig marketplace experiments guide.

Pro tips

- Keep assignment stable for the test duration.

- Avoid exposing the same customer to both groups during the same order flow.
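One common way to keep assignment stable is to hash a customer identifier rather than draw a random number on each page load. The sketch below assumes customer-level assignment; the salt string, the 20% holdout, and the function name are illustrative choices, not something the platforms prescribe.

```python
import hashlib


def assign_group(customer_id: str,
                 salt: str = "post_purchase_test_v1",  # hypothetical experiment key
                 holdout_share: float = 0.2) -> str:
    """Deterministically map a customer to 'control' (holdout) or 'treatment'."""
    digest = hashlib.sha256(f"{salt}:{customer_id}".encode("utf-8")).hexdigest()
    # Use the first 32 bits of the hash as a uniform number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "control" if bucket < holdout_share else "treatment"


# The same customer always lands in the same group for a given salt.
print(assign_group("customer-123"), assign_group("customer-123"))
```

Changing the salt reshuffles everyone, so use a new salt per experiment and leave it fixed for the test duration.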

—

Step 4: Plan sample size, power, and duration

You need enough eligible orders to detect your target effect.

- Inputs: baseline attach rate (last 4–8 weeks), desired MDE (e.g., +0.8 pp), α=0.05, power=0.8.

- Use a calculator for proportions to estimate required sample size; a widely used tool is Evan Miller’s proportions calculator (updated and reliable through 2025): Evan Miller sample size. For continuous outcomes and CUPED, Statsig’s updated calculators are helpful: Statsig on determining sample size.

- Run across full business cycles (at least two full weeks, so every weekday and weekend is covered twice) to reduce day‑of‑week seasonality.
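If you prefer to script the estimate instead of using a calculator, the standard normal-approximation formula for comparing two proportions can be sketched as follows. The 1% baseline and the function name are assumptions for illustration; `treat_per_control` is the ratio of treatment to control orders (1.0 for 50/50, 4.0 for 80/20).

```python
import math
from statistics import NormalDist


def control_sample_size(p_control: float, mde: float,
                        treat_per_control: float = 1.0,
                        alpha: float = 0.05, power: float = 0.8) -> int:
    """Eligible orders needed in control for a two-sided two-proportion z-test."""
    k = treat_per_control
    p_treat = p_control + mde
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    # Pooled rate under the null, weighted by group sizes.
    p_bar = (p_control + k * p_treat) / (1 + k)
    null_sd = math.sqrt(p_bar * (1 - p_bar) * (1 + 1 / k))
    alt_sd = math.sqrt(p_control * (1 - p_control) + p_treat * (1 - p_treat) / k)
    return math.ceil(((z_alpha * null_sd + z_beta * alt_sd) / mde) ** 2)


# Assumed inputs: 1% baseline attach rate, +0.8 pp MDE.
for k in (1.0, 4.0):
    n_control = control_sample_size(0.01, 0.008, treat_per_control=k)
    print(f"ratio {k}: control={n_control}, total={math.ceil(n_control * (1 + k))}")
```

For a fixed total, an even split is the most statistically efficient; a lopsided split such as 80/20 exposes more customers to the offer but needs more total eligible orders to reach the same power.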

Checkpoint

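- If you can’t reach the sample size in 3–4 weeks, move the split closer to 50/50 (for a fixed number of eligible orders, an even split gives the most power, so 80/20 needs more total orders than 50/50), relax the MDE, or broaden eligibility carefully.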

—

Step 5: Instrumentation and data model

Capture these fields for every eligible order (store in your warehouse or BI model):

- order_id, customer_id

- eligible_flag, exposure_flag (timestamp when rendered)

- offer_id, variant_id

- accept_flag (timestamp), addon_revenue, addon_cost, addon_margin

- device, channel, geo

- pre_period_order_count, pre_period_spend (for CUPED variance reduction)

- refunds_within_window, returns_flag, attribution_window_days
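As a rough illustration, the fields above could be modeled as one record per eligible order. This is a sketch of one possible shape, not a required schema: the types, defaults, and derived properties are assumptions, and the flags are derived from timestamps so the two cannot disagree.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class EligibleOrder:
    order_id: str
    customer_id: str
    variant_id: str                          # e.g. "treatment" or "control"
    eligible_flag: bool = True
    offer_id: Optional[str] = None
    exposure_ts: Optional[datetime] = None   # set when the offer rendered
    accept_ts: Optional[datetime] = None     # set on click/confirm
    addon_revenue: float = 0.0
    addon_cost: float = 0.0
    refunds_within_window: float = 0.0
    returns_flag: bool = False
    attribution_window_days: int = 1
    device: str = ""
    channel: str = ""
    geo: str = ""
    pre_period_order_count: int = 0          # CUPED covariate
    pre_period_spend: float = 0.0            # CUPED covariate

    @property
    def exposure_flag(self) -> bool:
        return self.exposure_ts is not None

    @property
    def accept_flag(self) -> bool:
        return self.accept_ts is not None

    @property
    def addon_margin(self) -> float:
        # Net of refunds inside the window, then minus cost.
        return self.addon_revenue - self.refunds_within_window - self.addon_cost


order = EligibleOrder("1001", "c-42", "treatment", offer_id="addon-A",
                      exposure_ts=datetime(2025, 8, 5, 12, 0),
                      accept_ts=datetime(2025, 8, 5, 12, 1),
                      addon_revenue=45.0, addon_cost=22.5)
print(order.exposure_flag, order.accept_flag, order.addon_margin)
```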

Platform specifics and references

- Shopify’s Checkout UI Extensions let you render on the Thank you page and log exposures/acceptances from your app; see Shopify Checkout UI Extensions and the Instructions API for capabilities and constraints.

- BigCommerce logging lives in your order confirmation template plus server‑side reconciliation via webhooks/APIs; consult the BigCommerce GraphQL Storefront reference.

- In GA4, include add‑ons as distinct items within the purchase event and add an item‑scope flag (e.g., item_type = "add_on"). Google describes purchase event and items arrays in current guidance: GA4 eCommerce events.

Refunds and returns (net revenue/margin)

- Net out refunds from add‑on revenue/margin using your platform’s refund APIs. Shopify documents refund_line_items and adjustments in their 2024‑07 Admin API: Shopify Refunds API. BigCommerce exposes line‑item and tax/shipping refund amounts in v3 refund endpoints: BigCommerce Refunds API.
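Once refund records are in your warehouse, the netting step itself is simple. The sketch below assumes you have already flattened add-on refunds into `(refunded_at, amount)` pairs; that shape and the function name are hypothetical and do not mirror either platform's API response.

```python
from datetime import datetime, timedelta
from typing import Iterable, Tuple


def net_addon_amounts(addon_revenue: float, addon_cost: float,
                      order_ts: datetime,
                      refunds: Iterable[Tuple[datetime, float]],
                      window_days: int) -> Tuple[float, float]:
    """Return (net_revenue, net_margin), counting only refunds inside the window."""
    cutoff = order_ts + timedelta(days=window_days)
    refunded = sum(amount for refunded_at, amount in refunds
                   if order_ts <= refunded_at <= cutoff)
    net_revenue = addon_revenue - refunded
    return net_revenue, net_revenue - addon_cost


order_ts = datetime(2025, 8, 5)
refunds = [(datetime(2025, 8, 7), 10.0),    # inside a 5-day window
           (datetime(2025, 8, 20), 5.0)]    # outside, ignored
print(net_addon_amounts(45.0, 22.5, order_ts, refunds, window_days=5))
```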

—

Step 6: Execute with QA gates

Before you launch, verify the following:

- Exposure integrity: Treatment shows the offer; control does not (or sees ghost frame). Log exposure_flag upon render.

- Double‑offer prevention: Ensure each eligible order sees at most one add‑on offer.

- Inventory and pricing: Don’t offer out‑of‑stock items; confirm price and tax handling.

- Logging completeness: Spot‑check that exposure, accept, revenue, and refund events stitch correctly to orders.

- Performance: Confirm no increase in checkout latency or payment failures.
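The first two gates lend themselves to an automated check over the exposure log. This is a minimal sketch assuming each log row is a dict with `order_id`, `group`, `offer_id`, and `exposed`; those keys and the `"ghost"` offer id are illustrative, not a platform format.

```python
from collections import Counter
from typing import Dict, List

GHOST_OFFER_ID = "ghost"  # hypothetical id for the placebo frame


def qa_exposure_log(rows: List[Dict]) -> List[str]:
    """Return a list of human-readable problems found in the exposure log."""
    problems = []
    real_offers_per_order = Counter()
    for row in rows:
        real_offer = row["exposed"] and row["offer_id"] != GHOST_OFFER_ID
        if real_offer and row["group"] == "control":
            problems.append(f"control order {row['order_id']} saw a real offer")
        if real_offer:
            real_offers_per_order[row["order_id"]] += 1
    for order_id, count in real_offers_per_order.items():
        if count > 1:
            problems.append(f"order {order_id} saw {count} offers")
    return problems


sample_rows = [
    {"order_id": "1", "group": "treatment", "offer_id": "A", "exposed": True},
    {"order_id": "2", "group": "control", "offer_id": "A", "exposed": True},
    {"order_id": "3", "group": "treatment", "offer_id": "A", "exposed": True},
    {"order_id": "3", "group": "treatment", "offer_id": "B", "exposed": True},
    {"order_id": "4", "group": "control", "offer_id": GHOST_OFFER_ID, "exposed": True},
]
for problem in qa_exposure_log(sample_rows):
    print(problem)
```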

—

Step 7: Analyze the results (with a 2025 worked example)

Compute these metrics using intent‑to‑treat (ITT: all eligible orders in each group). Use treatment‑on‑the‑treated (ToTT) as a secondary read.

Core formulas (plain text)

- Attach rate = orders_with_addon / eligible_orders

- Δattach = attach_rate_T − attach_rate_C

- IREO = addon_revenue_T / eligible_orders_T − addon_revenue_C / eligible_orders_C

- IMEO = addon_margin_T / eligible_orders_T − addon_margin_C / eligible_orders_C

- ToTT_rev_per_adopter = IREO / attach_rate_T

2025 example

- Period: Aug 5–18, 2025. Eligibility: US orders > $40, in‑stock add‑on A.

- Treatment: 60,000 eligible orders; 3,000 accepted add‑on; $135,000 add‑on revenue; $67,500 add‑on cost; $10,000 refunds within window.

- Control: 20,000 eligible orders; 200 accepted (baseline add‑ons from other channels); $6,000 add‑on revenue; $3,000 cost; $500 refunds.

Calculations

- Net add‑on revenue: T = 135,000 − 10,000 = 125,000; C = 6,000 − 500 = 5,500.

- Add‑on margin: T = 125,000 − 67,500 = 57,500; C = 5,500 − 3,000 = 2,500.

- Attach rates: T = 3,000 / 60,000 = 5.0%; C = 200 / 20,000 = 1.0%; Δattach = 4.0 pp.

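- IREO = 125,000 / 60,000 − 5,500 / 20,000 = 2.083 − 0.275 = $1.81 per eligible order. Each group is divided by its own eligible orders because the split is 75/25; dividing the raw difference by the combined 80,000 orders would understate the effect.

- IMEO = 57,500 / 60,000 − 2,500 / 20,000 = 0.958 − 0.125 = $0.83 per eligible order.

- ToTT_rev_per_adopter = 1.808 / 0.05 = $36.17 per adopter.

Decision read

- Passes the economic threshold (IMEO $0.83 ≥ $0.40) with a strong Δattach (4.0 pp against a 0.8 pp target). Proceed to rollout if guardrails are clean.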
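The example can be reproduced in a few lines, which also makes it easy to rerun on your own totals. This sketch normalizes each group by its own eligible orders before differencing and adds a pooled two-proportion z statistic for Δattach; the class and function names are illustrative, and the z statistic is a standard addition rather than part of the example above.

```python
import math
from dataclasses import dataclass


@dataclass
class GroupTotals:
    eligible_orders: int
    orders_with_addon: int
    addon_revenue: float
    addon_cost: float
    refunds: float

    @property
    def attach_rate(self) -> float:
        return self.orders_with_addon / self.eligible_orders

    @property
    def net_revenue(self) -> float:
        return self.addon_revenue - self.refunds

    @property
    def net_margin(self) -> float:
        return self.net_revenue - self.addon_cost


def uplift(t: GroupTotals, c: GroupTotals) -> dict:
    """Intent-to-treat uplift metrics, each group normalized by its own size."""
    delta_attach = t.attach_rate - c.attach_rate
    ireo = t.net_revenue / t.eligible_orders - c.net_revenue / c.eligible_orders
    imeo = t.net_margin / t.eligible_orders - c.net_margin / c.eligible_orders
    # Pooled standard error for the difference in attach rates.
    pooled = ((t.orders_with_addon + c.orders_with_addon)
              / (t.eligible_orders + c.eligible_orders))
    se = math.sqrt(pooled * (1 - pooled)
                   * (1 / t.eligible_orders + 1 / c.eligible_orders))
    return {
        "delta_attach": delta_attach,
        "ireo": ireo,
        "imeo": imeo,
        "tott_rev_per_adopter": ireo / t.attach_rate,
        "z_attach": delta_attach / se,
    }


treatment = GroupTotals(60_000, 3_000, 135_000.0, 67_500.0, 10_000.0)
control = GroupTotals(20_000, 200, 6_000.0, 3_000.0, 500.0)
for name, value in uplift(treatment, control).items():
    print(f"{name}: {value:.4f}")
```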

Variance reduction with CUPED (optional but useful)

- If you have pre‑period order_count or spend per customer, apply CUPED to reduce variance in continuous outcomes. Optimizely’s explainer outlines the adjusted‑metric approach widely used in 2024–2025: Optimizely on CUPED. Statsig documents operational steps and when it helps most (strong correlation between covariate and outcome): Statsig CUPED method.
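For intuition, the adjustment can be sketched in plain Python. The data below is made up purely for illustration; in practice θ is usually estimated once on the pooled treatment and control data and then applied to both groups.

```python
from statistics import fmean, pvariance
from typing import List, Sequence


def cuped_adjust(y: Sequence[float], x: Sequence[float]) -> List[float]:
    """Return CUPED-adjusted outcomes: y_i - theta * (x_i - mean(x))."""
    mean_x, mean_y = fmean(x), fmean(y)
    cov_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    var_x = sum((xi - mean_x) ** 2 for xi in x)
    # theta = cov(X, Y) / var(X); fall back to no adjustment if X is constant.
    theta = cov_xy / var_x if var_x > 0 else 0.0
    return [yi - theta * (xi - mean_x) for xi, yi in zip(x, y)]


# Illustrative (made-up) per-customer values.
pre_period_spend = [20.0, 35.0, 50.0, 80.0, 120.0, 40.0]
addon_revenue = [0.0, 5.0, 9.0, 15.0, 25.0, 4.0]
adjusted = cuped_adjust(addon_revenue, pre_period_spend)
print(pvariance(addon_revenue), pvariance(adjusted))
```

The adjusted metric keeps the same mean as the original, so group differences are estimated on the same scale with less noise when the covariate correlates with the outcome.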

Phased rollouts/geo using DiD

- For geo or switchback designs, estimate uplift as (Y_T,post − Y_T,pre) − (Y_C,post − Y_C,pre). Statsig’s marketplace experimentation guide discusses these patterns and cautions (parallel trends, cluster effects) in 2024–2025: Statsig marketplace experiments guide.
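A minimal sketch of the difference-in-differences estimate, assuming you have one attach rate per day (or per block) for each group and period. The numbers are invented for illustration, and a point estimate like this says nothing about uncertainty or whether parallel trends hold.

```python
from statistics import fmean
from typing import Sequence


def did_uplift(treat_pre: Sequence[float], treat_post: Sequence[float],
               control_pre: Sequence[float], control_post: Sequence[float]) -> float:
    """(Y_T,post - Y_T,pre) - (Y_C,post - Y_C,pre), using period averages."""
    treat_change = fmean(treat_post) - fmean(treat_pre)
    control_change = fmean(control_post) - fmean(control_pre)
    return treat_change - control_change


# Illustrative daily attach rates for a treated region and a comparison region.
estimate = did_uplift(
    treat_pre=[0.010, 0.012], treat_post=[0.050, 0.052],
    control_pre=[0.010, 0.012], control_post=[0.011, 0.013],
)
print(f"DiD uplift: {estimate:.3f}")
```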

Cannibalization and guardrails

- Compare non‑add‑on AOV and core item conversion between treatment and control. Watch returns/CS contacts. For guardrail design thinking, see Statsig on guardrails.

Segmentation and targeting

- Slice by new vs returning, device, traffic source, price tier, and category affinity to find heterogeneous treatment effects. Consider simple uplift targeting next: prioritize segments with higher Δattach and positive IMEO.
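A per-segment cut of Δattach can be sketched as below. It assumes rows of `(segment, group, attached)` tuples, which is an illustrative shape; small segments will be noisy, so treat the output as a pointer for follow-up tests rather than a conclusion.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def delta_attach_by_segment(rows: Iterable[Tuple[str, str, bool]]) -> Dict[str, float]:
    """Return attach_rate_T - attach_rate_C for every segment with both groups."""
    # counts[segment][group] = [eligible_orders, orders_with_addon]
    counts = defaultdict(lambda: {"treatment": [0, 0], "control": [0, 0]})
    for segment, group, attached in rows:
        counts[segment][group][0] += 1
        counts[segment][group][1] += int(attached)
    result = {}
    for segment, groups in counts.items():
        n_t, a_t = groups["treatment"]
        n_c, a_c = groups["control"]
        if n_t == 0 or n_c == 0:
            continue  # cannot compare without both groups
        result[segment] = a_t / n_t - a_c / n_c
    return result


sample = [
    ("new", "treatment", True), ("new", "treatment", False),
    ("new", "control", False), ("new", "control", False),
    ("returning", "treatment", True), ("returning", "control", True),
    ("mobile_only", "treatment", True),
]
print(delta_attach_by_segment(sample))
```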

—

Step 8: Decide, roll out, and monitor

Pass criteria (example)

- Δattach ≥ 0.8 pp and IMEO ≥ $0.40.

- No material degradation in guardrails (latency, payment failures, refunds, CS contacts).
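Writing the pass criteria down as code before the test starts helps avoid moving the goalposts later. This sketch hard-codes the example thresholds above as defaults; the guardrail names and the boolean "within bounds" representation are assumptions.

```python
from typing import Dict


def passes_thresholds(delta_attach_pp: float, imeo: float,
                      guardrails_ok: Dict[str, bool],
                      min_delta_attach_pp: float = 0.8,
                      min_imeo: float = 0.40) -> bool:
    """True only if both economic thresholds and every guardrail pass."""
    return (delta_attach_pp >= min_delta_attach_pp
            and imeo >= min_imeo
            and all(guardrails_ok.values()))


guardrails = {"latency": True, "payment_failures": True,
              "refunds": True, "cs_contacts": True}
print(passes_thresholds(delta_attach_pp=4.0, imeo=0.83, guardrails_ok=guardrails))
```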

Rollout playbook

- Start with 50–75% exposure to avoid ops shocks, then move to 90–100% if stable.

- Keep a 5–10% persistent holdout for ongoing measurement.

- Refresh creative/pricing every 4–8 weeks; re‑test after major merchandising changes.

Post‑launch monitoring

- Track the weekly IMEO trend, refunds netted within the attribution window, and guardrails (time‑series alerts). For inspiration on time‑series monitoring, see Statsig’s 2024–2025 pulse discussions in Pulse time‑series insights.

—

Troubleshooting and FAQs

My attach rate went up, but revenue didn’t. What gives?

- Check discounting and refunds. Ensure you’re using IMEO (margin) not revenue only, and net refunds inside the attribution window via Shopify/BigCommerce refund APIs documented in 2024–2025.

Treatment shows worse checkout performance.

- Validate that your extension/template is lightweight. If latency increases or payment failures rise, pause and fix. Use placebo/ghost UI to separate UX friction from economic value.

I can’t randomize at the user level.

- Use geo clusters or a time‑based switchback and analyze with DiD. Keep blocks long enough to smooth hourly seasonality, and verify parallel trends pre‑test.

The test is underpowered.

- Revisit MDE, extend duration, increase traffic, or apply CUPED with pre‑period covariates if available. Use updated calculators like Evan Miller’s or Statsig’s to re‑plan.

Returns are eroding my gains.

- Shorten the attribution window for email/SMS, tighten eligibility (e.g., exclude fragile or high‑return categories), and optimize creative to set expectations.

—

Copy‑paste formulas and checkpoints

Formulas

- Attach rate = orders_with_addon / eligible_orders

- Δattach = attach_rate_T − attach_rate_C

- IREO = addon_revenue_T / eligible_orders_T − addon_revenue_C / eligible_orders_C

- IMEO = addon_margin_T / eligible_orders_T − addon_margin_C / eligible_orders_C

- ToTT_rev_per_adopter = IREO / attach_rate_T

- DiD uplift = (Y_T,post − Y_T,pre) − (Y_C,post − Y_C,pre)

- CUPED adjustment = Y − θ × (X − mean(X)), where X is the pre‑period covariate and θ = cov(X, Y) / var(X)

Checkpoints

- Eligibility frozen and documented? Yes/No

- Exposure logging verified on Thank‑you/confirmation surfaces? Yes/No

- Refunds netted within window in BI? Yes/No

- Guardrails within bounds? Yes/No

- Sample size reached and run covered full weeks? Yes/No

—

References (authoritative, 2024–2025)

- Shopify Checkout Extensibility docs and targets for Thank‑you page instrumentation: Shopify Checkout UI Extensions, Customize footer/Thank you targets, and Instructions API 2024‑07.

- BigCommerce APIs and templates for order confirmation logging and reconciliation: BigCommerce GraphQL Storefront reference and Refunds API.

- GA4 purchase event and item arrays for modeling add‑ons: GA4 eCommerce events.

- Experimentation and power planning: Optimizely A/B testing ideas; Evan Miller sample size; Statsig on determining sample size.

- CUPED and variance reduction: Optimizely on CUPED; Statsig CUPED method.

- Geo/switchback and DiD context: Statsig marketplace experiments guide and related posts.

- Guardrails and monitoring: Statsig on guardrails; Pulse time‑series insights.