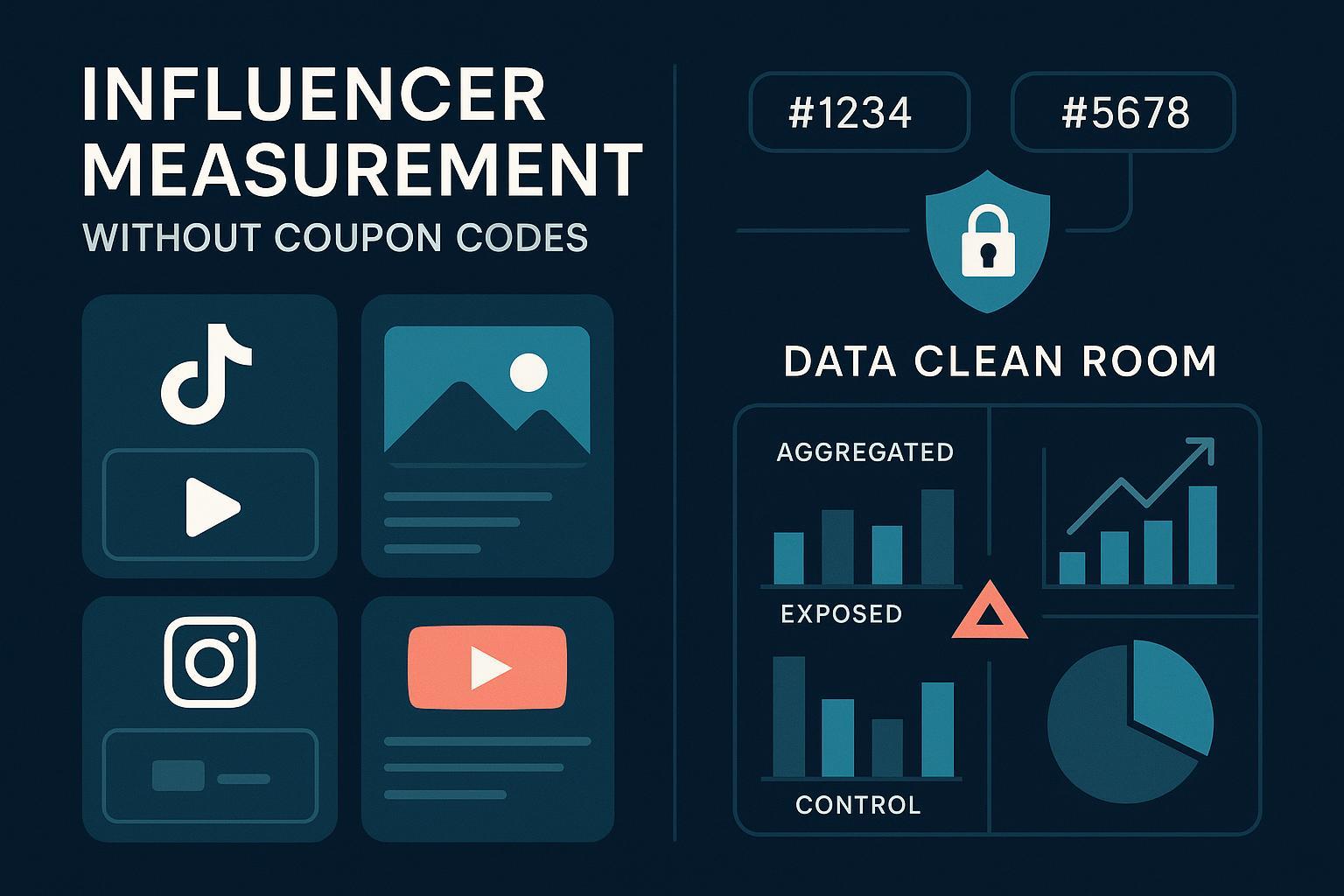

If you’re still proving influencer ROI with coupon codes and last‑click links, you’re leaving accuracy—and budget—on the table. In 2025, the bar is privacy‑first, multi‑channel, and incrementality‑driven. This guide distills what’s worked for my teams: using data clean rooms to match exposures to outcomes and running uplift tests to quantify real business impact—without relying on promo codes.

Key outcomes you can expect from the approach below:

- Privacy‑safe, deterministic audience matching with first‑party consented data

- Clear incrementality estimates (exposed vs. control) that survive executive scrutiny

- A workflow you can scale across creators and channels

Why Coupon Codes Fail as “Attribution”

Coupon codes were a useful shortcut. They’re also noisy and easily gamed:

- Cross‑channel contamination: Users see an influencer post, search Google, click a Shopping ad, and still use the code. The influencer gets credit for non‑incremental behavior.

- Code leakage: Forums and aggregator sites spread codes to non‑exposed audiences.

- Platform bias: In‑app browsing and delayed conversions break code‑only attribution.

When regulators and platforms force privacy‑preserving data practices, coupon‑based attribution becomes both weak and risky. The measurement stack has to evolve.

The Maturity Journey (2025 Lens)

1) Coupon codes and last‑click links → 2) UTMs with analytics dashboards → 3) Post‑purchase surveys → 4) Audience matching with hashed identifiers → 5) Clean rooms + uplift testing.

The last step gives you two critical advantages: privacy‑safe data collaboration and causal impact measurement.

Quick Primers (No Jargon)

- Data Clean Room (DCR): A secure environment where two or more parties match and analyze first‑party data without exposing raw PII, with strict aggregation controls. See the concise definitions and use cases in Snowflake’s “What is a data clean room?” and the workflow benefits in Snowflake’s article on unlocking insights with data clean rooms.

- Uplift (Incrementality) Test: A controlled measurement comparing users exposed to an influencer campaign vs. a similar control group that wasn’t exposed, estimating the incremental lift attributable to that exposure. For a rigorous marketing overview, see the Measured “Incrementality Measurement for Marketers” guide (PDF) and the INCRMNTAL explainer on incremental lift.

- Interoperability & Standards: In 2025, IAB Tech Lab finalized the Attribution Data Matching Protocol (ADMaP) to enable privacy‑centric, interoperable attribution using authenticated first‑party data and privacy‑enhancing tech.

Step‑by‑Step: Influencer Tracking Without Coupon Codes

This is the exact operational workflow we use for privacy‑safe influencer measurement across platforms. Adapt steps to your stack.

1) Data Preparation and Consent

- Collect and normalize your first‑party conversion data: order ID, timestamp (UTC), revenue, product, and a consented identifier (hashed email/phone with salt). Keep your consent records aligned with region‑specific laws (GDPR/CPRA) and platform requirements.

- From influencer partners/platforms, secure exposure logs: content ID, creator handle, post timestamp, platform, and matched audience identifiers where allowed (often via a platform/partner integration). No raw PII should be exchanged—only hashed IDs inside the clean room.

- Document data provenance and consent attestations. Platforms like Google’s Ads Data Hub enforce policy‑specific data isolation and consent checks; see the ADH release notes (Jan–May 2024 updates) and user‑provided data matching guidance.

Practical tip: Maintain a field map and unit tests to catch timezone drift, currency mismatches, and duplicate IDs before loading anything into a DCR.
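The normalization and hashing step above can be sketched as follows. This is a minimal illustration, not a platform requirement: the function names, the placeholder salt, and the default country code are assumptions, and the exact normalization rules and salt handling must match whatever you and your partners agree on in the clean room.

```python
import hashlib
import re


def normalize_email(raw: str) -> str:
    """Trim whitespace and lowercase so formatting differences do not change the hash."""
    return raw.strip().lower()


def normalize_phone(raw: str, default_country_code: str = "1") -> str:
    """Keep digits only and add a country code (the default of '1' is an assumption)."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 10:  # assumption: a 10-digit value is a national number
        digits = default_country_code + digits
    return "+" + digits


def hash_identifier(normalized: str, salt: str) -> str:
    """Return the SHA-256 hex digest of salt + normalized value."""
    return hashlib.sha256((salt + normalized).encode("utf-8")).hexdigest()


SALT = "example-shared-salt"  # placeholder; agree on the real value with your partner

a = hash_identifier(normalize_email("  Jane.Doe@Example.com "), SALT)
b = hash_identifier(normalize_email("jane.doe@example.com"), SALT)
print(a == b)  # True: both inputs normalize to the same string
```

Provider-specific rules (for example, whether dots in the local part are significant) are deliberately left out here; add them only if both sides apply the same rule.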

2) Choose the Right Clean Room

You’ll likely combine an enterprise DCR with walled‑garden environments:

- Enterprise/Interoperable DCRs: LiveRamp’s Habu (cross‑cloud) and Safe Haven are built for data collaboration with identity resolution; see LiveRamp’s Habu acquisition announcement and IDC MarketScape leadership overview. InfoSum offers decentralized collaboration (no data movement) used by major media partners; see InfoSum’s Samsung Ads AU launch and InfoSum’s collaboration with Choreograph/GroupM.

- Walled Gardens:

  - Google Ads Data Hub (ADH) joins your first‑party data with Google ad events; outputs are aggregated; see the ADH intro and marketer query guides.

  - Amazon Marketing Cloud (AMC) enables secure planning and measurement with Amazon signals; 2025 updates include a purchase signal lookback expansion to 5 years and integration of Amazon Live signals for live shopping measurement. AMC eligibility expanded in 2024; see the eligibility update and the Ads Data Manager beta for 1P onboarding.

Selection criteria I use:

- Data governance (aggregation thresholds, query auditing), interoperability, identity options

- Availability of partner/platform integrations relevant to your channels

- Cost and team skill fit (SQL‑heavy vs. templated workflows)

3) Governance: Set the Rules Before the Analysis

- Enforce aggregation thresholds (e.g., k‑anonymity minimum row counts) so that no output cohort is small enough to re‑identify a person. Most DCRs enforce this by design; see Snowflake’s privacy controls in its DCR fundamentals.

- Define allowed queries and output schemas. Lock down any free‑form joins that could leak identity.

- Record consent jurisdictions and ensure cross‑border transfer compliance. For EU–US data flows, confirm your vendors participate in the Data Privacy Framework; the European Commission’s 2024 first periodic review of the EU–US DPF affirmed adequacy into 2025.
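To make the aggregation-threshold rule concrete, here is a small sketch of the logic in plain Python. In practice the clean room enforces this for you; the function name, the `user_hash` field, and the threshold of 100 are illustrative assumptions, and a simple minimum-count rule is only one ingredient of a real privacy policy.

```python
from collections import defaultdict


def aggregate_with_threshold(rows, group_keys, k_min=100):
    """Count distinct users per group and drop groups smaller than k_min.

    rows: iterable of dicts that each carry a 'user_hash' plus the grouping fields.
    """
    users_by_group = defaultdict(set)
    for row in rows:
        key = tuple(row[k] for k in group_keys)
        users_by_group[key].add(row["user_hash"])

    output = []
    for key, users in sorted(users_by_group.items()):
        if len(users) >= k_min:  # suppress any cohort below the threshold
            output.append({**dict(zip(group_keys, key)), "users": len(users)})
    return output


rows = [
    {"user_hash": "a1", "platform": "tiktok", "creator": "c1"},
    {"user_hash": "a2", "platform": "tiktok", "creator": "c1"},
    {"user_hash": "a3", "platform": "youtube", "creator": "c2"},
]
# With a toy threshold of 2, only the tiktok/c1 cohort survives.
print(aggregate_with_threshold(rows, ["platform", "creator"], k_min=2))
```

Counting distinct hashed users rather than rows matters here: one heavy user with many events should not push a cohort over the threshold.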

4) Build Deterministic Cohorts in the Clean Room

- Exposed: Users deterministically matched to influencer content exposures within your lookback window (e.g., 7–30 days). Include platform, creator, and content identifiers.

- Control: A matched cohort with similar propensity to convert but no recorded exposure during the same window. Techniques:

  - User‑level randomized holdouts when feasible (gold standard)

  - Matched geo/cell tests when user‑level randomization isn’t possible

  - Carefully designed pre/post periods as a last resort (control for seasonality)

For methodology depth, see Measured’s incrementality testing guide and INCRMNTAL’s lift overview.

Contamination controls:

- Exclude from the control cohort anyone with any signal of exposure (cross‑platform deduping inside the DCR)

- Freeze cohorts after start to avoid mid‑test drift

- Monitor natural spillover—geo tests need guard zones between markets
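One way to get a user-level holdout that stays frozen is to assign users by hashing their identifier together with a test ID, so the same user always lands in the same group. The sketch below assumes you control who is eligible for exposure; the function names, the test ID, and the 10% holdout are hypothetical, and real assignment would run inside the clean room or the platform.

```python
import hashlib


def in_holdout(user_hash: str, test_id: str, holdout_pct: float) -> bool:
    """Deterministically map a user to [0, 1) and compare against the holdout share."""
    digest = hashlib.sha256(f"{test_id}:{user_hash}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # first 32 bits scaled to [0, 1)
    return bucket < holdout_pct


def build_cohorts(eligible, exposed_any_platform, test_id, holdout_pct=0.10):
    """Split eligible users into treatment and control, flagging contaminated holdouts.

    exposed_any_platform: set of user hashes with any recorded exposure signal.
    """
    treatment, control, contaminated = set(), set(), set()
    for user in eligible:
        if in_holdout(user, test_id, holdout_pct):
            if user in exposed_any_platform:
                contaminated.add(user)  # held out but exposed anyway; keep for the audit
            else:
                control.add(user)
        else:
            treatment.add(user)
    # Frozen sets signal that cohort membership should not change mid-test.
    return frozenset(treatment), frozenset(control), frozenset(contaminated)


eligible = [f"user{i}" for i in range(1000)]
treatment, control, contaminated = build_cohorts(eligible, {"user7"}, "creator-test-01")
print(len(treatment), len(control), len(contaminated))
```

Because assignment depends only on the identifier and the test ID, rerunning the job reproduces the same cohorts. A large contaminated set is a signal to revisit the test design rather than something to quietly drop.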

5) Powering the Test: Minimum Detectable Lift (MDL)

- Compute MDL from your baseline conversion rate, the sample size you can realistically reach, and your desired power and confidence level, then compare it with the lift you expect. If the MDL is larger than any plausible lift, the test will only return noise; if you target a very small lift, the sample size and duration required will burn time and budget. The MDL framework is detailed in the Measured guide. As a rule of thumb, start with at least 2–4 weeks for eCommerce cycles and confirm you have enough exposed users per creator/cluster to clear thresholds.
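As a rough sketch of the arithmetic, the functions below use the standard normal approximation for comparing two conversion rates. This is a textbook two-proportion calculation offered as an assumption, not the specific method in the Measured guide; the defaults of 5% two-sided significance and 80% power are conventional choices, and the function names are illustrative.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_arm(baseline_cvr, relative_lift, alpha=0.05, power=0.80):
    """Users needed in each cohort to detect the given relative lift."""
    p1 = baseline_cvr
    p2 = baseline_cvr * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


def minimum_detectable_lift(baseline_cvr, n_per_arm, alpha=0.05, power=0.80):
    """Approximate relative lift detectable with n_per_arm users in each cohort."""
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    abs_delta = z * sqrt(2 * baseline_cvr * (1 - baseline_cvr) / n_per_arm)
    return abs_delta / baseline_cvr


# Example inputs: a 1.8% baseline and a 5% relative lift (both illustrative).
print(sample_size_per_arm(0.018, 0.05))
print(round(minimum_detectable_lift(0.018, 50_000), 3))
```

With a low baseline rate, small relative lifts require a few hundred thousand users per arm, which is why clustering creators or extending duration is often the only way to reach a usable MDL.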

6) Execute and Log Everything

- Pre‑register your test design (even a one‑pager). Define success metrics: incremental conversions, revenue, new‑to‑brand rate, AOV lift, and payback.

- Run the influencer content per plan and maintain an exposure ledger (creator, content, spend, dates) to reconcile with platform logs.

- Ensure your clean room job schedules capture exposures and conversions on a consistent cadence (daily), with immutable snapshots for auditing.

7) Analyze Inside the Clean Room; Export Only Aggregates

- Primary estimates: difference‑in‑means or regression‑adjusted lift between exposed and control cohorts, with confidence intervals.

- Segment by creator, platform, content format, and audience attributes (age buckets, geo, new vs. returning) where privacy thresholds allow.

- Keep the raw joins, identity pointers, and user‑level paths confined to the DCR; only aggregated tables leave.
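For the primary estimate, a difference-in-means calculation needs only four aggregate numbers per segment, which fits the export-only-aggregates rule. The sketch below uses a normal-approximation confidence interval; the function name and the example counts are made up for illustration, and a regression-adjusted estimate would need more than this.

```python
from math import sqrt
from statistics import NormalDist


def lift_estimate(conv_exposed, n_exposed, conv_control, n_control, confidence=0.95):
    """Difference in conversion rates with a normal-approximation confidence interval."""
    p_e = conv_exposed / n_exposed
    p_c = conv_control / n_control
    diff = p_e - p_c
    se = sqrt(p_e * (1 - p_e) / n_exposed + p_c * (1 - p_c) / n_control)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return {
        "absolute_lift": diff,
        "relative_lift": diff / p_c if p_c > 0 else None,
        "ci_low": diff - z * se,
        "ci_high": diff + z * se,
    }


# Illustrative counts only: 2,100 of 100,000 exposed vs. 1,800 of 100,000 control.
result = lift_estimate(2100, 100_000, 1800, 100_000)
print(result)
```

If the interval includes zero, the segment has not shown lift at the chosen confidence level, regardless of how the point estimate looks.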

In walled gardens, rely on their privacy‑safe environments and templates where available—e.g., Google Ads Data Hub marketer query patterns. For Amazon ecosystems, leverage AMC’s expanded lookback window for LTV/new‑to‑brand analyses.

8) Triangulate with Privacy‑First Trends

The industry is shifting away from third‑party cookies and toward API‑based attribution. Google’s Privacy Sandbox timeline continues into 2025 with adjusted third‑party cookie phase‑out plans; see Privacy Sandbox’s 2025 next steps and the broader phase‑out plan update. Align your GA4 and ads measurement to support these APIs over time.

9) Turn Results into Decisions

- Compute incremental ROAS (iROAS) and cost per incremental conversion by creator/platform.

- Reallocate budget: double down on creators with proven lift; pause those with none or negative incremental return.

- Create program rules: minimum cohort size, default test duration, and retest cadence.
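The two decision metrics above reduce to simple arithmetic once you have cohort-level conversion rates. This sketch assumes incremental orders carry the same average order value as other orders, which may not hold for your brand; the function name and the example figures are illustrative.

```python
def incrementality_economics(n_exposed, cvr_exposed, cvr_control, avg_order_value, spend):
    """Compute incremental conversions, iROAS, and cost per incremental conversion."""
    incremental_conversions = (cvr_exposed - cvr_control) * n_exposed
    incremental_revenue = incremental_conversions * avg_order_value
    iroas = incremental_revenue / spend if spend > 0 else None
    # Cost per incremental conversion is undefined when there is no positive lift.
    cpic = spend / incremental_conversions if incremental_conversions > 0 else None
    return {
        "incremental_conversions": incremental_conversions,
        "incremental_revenue": incremental_revenue,
        "iroas": iroas,
        "cost_per_incremental_conversion": cpic,
    }


# Illustrative inputs: 100,000 exposed users, 2.1% vs. 1.8% CVR, 60 AOV, 9,000 spend.
print(incrementality_economics(100_000, 0.021, 0.018, 60.0, 9_000.0))
```

A `None` cost per incremental conversion corresponds to the "none or negative incremental return" case in the reallocation rule above.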

A Synthesized Case Walkthrough (Method Over Hype)

Context: Mid‑market D2C brand running TikTok, Instagram, and YouTube creator collaborations. Goal: quantify incremental new‑to‑brand orders and revenue without coupon codes.

Steps taken:

- Data ingestion: eCom orders with hashed emails (SHA‑256 + salt), revenue, products, and consent flags; platform exposure logs via official partners; creator/content metadata.

- Clean room setup: Enterprise DCR to join first‑party data with influencer platform signals; walled gardens used for channel‑specific analyses.

- Cohorts: User‑level holdouts for a subset of the audience; matched geo tests for the rest where user‑level randomization wasn’t possible.

- MDL: Based on historical baseline (1.8% sitewide CVR for similar cohorts), we sized the test to detect a mid‑single‑digit lift with 80% power.

- Execution: 5‑week run; content calendars locked; daily exposure and conversion snapshots; k‑anonymity thresholds at 100.

- Analysis: Regression‑adjusted lift by platform and creator cluster; triangulated with pre/post trends for sanity.

What we learned:

- View‑through exposure mattered more than link clicks for short video; strict last‑click would have missed the effect entirely.

- Creator fit and frequency beat raw reach. A few mid‑tier creators delivered more incremental lift than top‑reach accounts.

- Geo spillovers can hide or inflate effects—guard zones between test/control DMAs reduced bias.

What we changed next:

- Standardized a quarterly uplift cadence for top creator clusters.

- Introduced an “exploration” budget to test 20% new creators under the same measurement guardrails.

- Built a shared ops checklist (below) to avoid repeating setup mistakes.

Legacy vs. Modern Attribution: Honest Trade‑offs

- Coupon/Last‑Click

  - Pros: Simple, fast, low cost

  - Cons: Misattributes cross‑channel behavior, code leakage, easily gamed, not privacy‑forward

- Clean Rooms + Uplift

  - Pros: Privacy‑safe, deterministic matching, causal impact estimates, scalable across channels

  - Cons: Requires data engineering/analytics skills, testing discipline, and platform partnerships; costs more upfront

Use clean rooms and uplift when:

- You have sufficient first‑party identifiers and consent

- You can reach MDL with your audience size and campaign cadence

- You operate across multiple platforms or sell offline or through marketplaces (where codes fail)

Stick to simpler methods when:

- You lack consented identifiers or can’t meet privacy thresholds

- Your scale is too small to power a test (consider qualitative/brand lift proxies until you scale)

Tools and Resources (Shortlist I Trust)

- Clean rooms/data collaboration: LiveRamp + Habu (product and acquisition overview), LiveRamp Safe Haven/IDC leadership, InfoSum partnership examples, Snowflake DCR fundamentals

- Walled gardens: Google Ads Data Hub intro and marketer query guide; Amazon Marketing Cloud 5‑year lookback update and Amazon Live signals integration

- Incrementality: Measured marketer guide (PDF), INCRMNTAL: What is incremental lift?

- Standards and privacy: IAB Tech Lab ADMaP (2025); EU–US Data Privacy Framework 2024 review; Privacy Sandbox 2025 next steps

Common Pitfalls and How to Avoid Them

- Identity mismatch and low match rates

  - Problem: Different hashing/salting, email normalization issues (case sensitivity, dots), or phone formats cut your match rate.

  - Fix: Standardize normalization before hashing; agree on hash algorithm and salt exchange in your DCR governance; run match‑rate diagnostics on synthetic data inside the DCR.

- Control contamination

  - Problem: “Control” users accidentally get exposed via cross‑channel spillovers or creators posting outside the plan.

  - Fix: Lock control cohorts; add guard zones in geo tests; set creator posting SLAs; run exposure audits mid‑test.

- Insufficient power

  - Problem: Test ends with wide confidence intervals; stakeholders don’t buy the result.

  - Fix: Calculate MDL up front; consolidate creators into clusters; run longer; or rotate sequential geo tests to accumulate power (as advocated in the Measured guide).

- Privacy threshold failures

  - Problem: Reports fail because cohorts don’t meet k‑anonymity thresholds; exports get blocked.

  - Fix: Aggregate to higher levels (creator cluster vs. individual), extend duration, or widen windows responsibly while maintaining test integrity. Snowflake’s guidance on clean room privacy fundamentals is a useful reference.

- Over‑indexing on a single platform’s lift

  - Problem: Platform‑measured lift doesn’t translate into total business impact.

  - Fix: Triangulate with your enterprise DCR where possible; validate cross‑channel effects and cannibalization.

- Ignoring offline and marketplace sales

  - Problem: Influencer demand shows up in retail/POS or marketplaces, invisible to your site analytics.

  - Fix: Bring POS/CRM/retailer feeds into your DCR; for Amazon, leverage AMC’s extended lookback for new‑to‑brand/LTV views.

Ops Checklist You Can Copy

- Data

  - [ ] 1P conversions with hashed IDs (SHA‑256 + salt), consent flags, timestamps in UTC

  - [ ] Exposure logs per platform (content ID, creator, timestamp, placement)

  - [ ] Normalization tests for IDs, timezones, and currencies

- Governance

  - [ ] Aggregation thresholds (k‑anonymity) documented/enforced

  - [ ] Allowed queries and output schemas pre‑approved

  - [ ] Cross‑border data transfer and DPF participation checked

- Testing

  - [ ] MDL calculated; test length and sample size validated

  - [ ] Exposed and control cohort definitions frozen pre‑launch

  - [ ] Contamination monitoring in place (mid‑test audits)

- Analysis & Reporting

  - [ ] Lift estimates with CIs; segment by creator cluster and platform

  - [ ] iROAS and cost per incremental conversion computed

  - [ ] Decision rules for budget reallocation agreed with stakeholders

The Bottom Line

Promo codes can’t answer the question your CFO is asking: what did this creator change that wouldn’t have happened anyway? Clean rooms give you the privacy‑safe rails to connect exposures to outcomes; uplift tests give you causal answers you can take to planning. Start small, power your tests correctly, enforce governance, and scale the workflow once you trust the results.

For technical reference as you implement: the IAB Tech Lab’s 2025 ADMaP for interoperable attribution, Snowflake’s clean room fundamentals, Google Ads Data Hub documentation and updates, Amazon Marketing Cloud feature updates, the EU–US DPF 2024 review, and the Measured incrementality guide.